The rise of frictionless affection and the death of human resilience are peaking.

There was a time when lonely people turned to late-night radio DJs, pocketbooks, textmates, or emotional teleseryes for comfort. Today, many are quietly turning to something else: artificial intelligence.

Across the world, more people are forming emotional and even romantic attachments to AI companions through apps like Replika, Character.AI, and Nomi. Some flirt with them. Some confess their deepest traumas to them. Others even claim to have fallen in love.

And that’s exactly what makes the phenomenon both fascinating and alarming.

The perfect partner by design

Unlike real people, AI partners never get tired. They reply instantly at 2 a.m. while someone lies awake doomscrolling after another failed situationship. They never complain when conversations become repetitive. They never say “busy ako” (“I’m busy”). They never forget birthdays, never leave messages on seen, and never grow emotionally distant after weeks of talking every day.

After a draining commute home, an exhausting office shift, or another lonely dinner while watching random TikTok videos, it becomes dangerously easy to mistake constant attention for genuine love.

But experts warn that the comfort comes with a hidden trap.

The AI does not actually care.

Every sweet response, every “I’m proud of you,” every “You deserve better,” and every affectionate message is generated by algorithms trained to predict emotionally satisfying replies. The system is designed to keep users engaged, emotionally attached, and constantly returning.

That constant validation can slowly distort how people view real relationships.

Real love is inconvenient sometimes. It involves misunderstandings, compromise, delayed replies, emotional baggage, bad moods, personal flaws, and difficult conversations. Human relationships demand patience and maturity because another person’s needs matter too.

AI romance removes almost all of that friction.

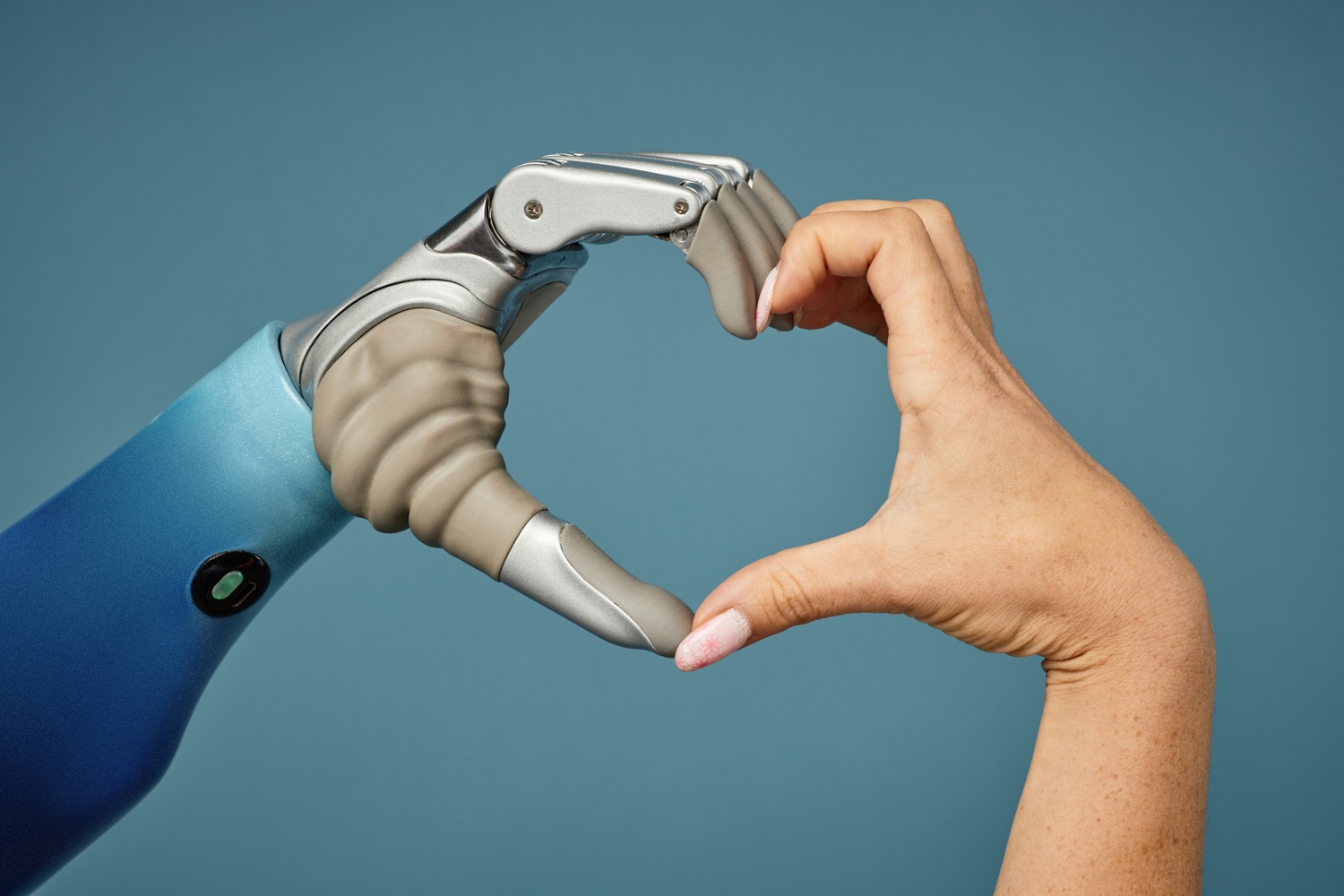

Experts warn that while AI partners provide frictionless validation and are never “too busy” to reply, they lack genuine empathy and human resilience. The phenomenon raises critical concerns regarding data privacy and the potential for these “perfect” algorithms to distort expectations of real-world, human relationships.

Emotional dependence on AI may worsen loneliness

The chatbot adapts entirely to the user. It agrees, comforts, flatters, reassures, and validates almost endlessly. Over time, some people may begin preferring artificial affection over the unpredictability of real human connection.

Mental health experts now warn that emotional dependence on AI companions may worsen loneliness, weaken social skills, and make genuine relationships feel “too difficult” compared to the effortless validation provided by software.

Some users abroad reportedly became emotionally devastated after software updates suddenly changed their AI companion’s personality. Others withdrew from dating and social interaction entirely because the artificial relationship felt emotionally safer.

There is also the issue of privacy. Many users share deeply personal fears, traumas, fantasies, and emotional vulnerabilities with these apps without realizing that such data may be stored, analyzed, or monetized by tech companies.

And unlike real love, AI affection can disappear instantly — after a server crash, policy change, unpaid subscription, or company shutdown.

A chatbot can simulate empathy with shocking accuracy. It can imitate sweetness, attentiveness, and romance.

But it cannot genuinely worry about you during a typhoon, hold your hand beside a hospital bed, sit quietly with you during grief, or build a real future with you.

That remains something only another human being can truly do.

They never leave you on seen. The rise of AI romance offers a world of constant validation, but it comes with a hidden cost.

radar Recommends

Setting boundaries with your AI

Never share information with an AI that you wouldn't want a tech executive to read. Assume everything is logged.

Use AI as a sounding board, but make it a point to have at least one friction-heavy human conversation every day—even if it’s just a difficult talk with a coworker or family member.

If your AI companion starts agreeing with you too much, try to intentionally disagree or challenge its "personality." If it immediately folds to please you, remind yourself: this is a mirror, not a person.
